One of scientific research main challenges include the lack

of experimental design and execution which meet a significant testing

procedure. I myself have experienced these types of issues arise in my own laboratory

experience. One of the first warning signs of the issues was that the

experimental design never followed the null hypothesis significance testing

procedure. A common day in the lab would consist of designing an experiment

that included the appropriate controls, instead of designing an experiment

based on what type of statistical test would need to be performed (t-test,

ANOVA). Secondly, one should form an if/then statement that contains the

statistical null and the alternate hypothesis based on predicted effect. In

addition, alpha and beta threshold errors were not considered prior to an

experimental design, well –up until the beginning of this course this was not

been done. It seems that as a young scientist I had never heard of the concept

of designing an experiment where the sample size, exclusion criteria was

planned out prior to the execution of the experiment. It was truly shocking and

unfortunate to find out in a class that I had been doing this wrong the whole

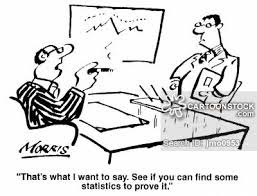

time. I was just like the scientist described in the comic below. Finding a way

to use statistics to prove something I had preconceived in my mind as being

correct.
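
To make the idea of planning a sample size before running an experiment concrete, here is a minimal sketch in Python. It uses the common normal approximation for a two-sided comparison of two group means. The function name `n_per_group` and the values chosen (a standardized effect size of 0.5, alpha of 0.05, power of 0.80, meaning beta of 0.20) are my own illustrative assumptions, not numbers from any real experiment.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate sample size per group for a two-sided, two-group
    comparison of means, using the normal approximation.

    effect_size is the expected difference between group means divided
    by the standard deviation (an assumption you must justify up front).
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    z = NormalDist()                    # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for the alpha threshold
    z_beta = z.inv_cdf(power)           # power = 1 - beta
    return ceil(2 * ((z_alpha + z_beta) / effect_size) ** 2)


# Hypothetical planning values, chosen only for illustration.
print(n_per_group(0.5))
```

With these assumed values the sketch gives 63 subjects per group. An exact calculation based on the t distribution gives a slightly larger number, so treat this as a rough starting point rather than a final answer. The point is that alpha, beta, and the expected effect are all decided before any data are collected.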

One of the major challenges in statistics is the lack of knowledge about how to set up an experiment appropriately. This may be due to the complex language that is used, like error threshold, explanatory groups, and degrees of freedom. These terms now feel like a second language to us, but they were once words we did not truly understand. I think the most important issue is not knowing when to begin thinking about statistics in our research. Now it is ingrained in our brains: we must determine the type of data we are acquiring in order to determine the test we will perform. However, just last year I was collecting data, analyzing it, and calling it a day.

In order to overcome the major challenges in statistics, we need to educate scientists early in their careers, by requiring this type of course as part of the grants that professors receive, or as a department graduation requirement. I know in my case, this was an elective. I cannot imagine what my final PhD work would be like if I did not know what I know today.

This whole post sounds like it came straight from my brain, because that's honestly what I think about this whole statistics course. Things that I should have known two years ago. Why was I doing research four years ago?? Oh my gosh, how didn't I know anything about experimental design in college?? How haven't I taken a real statistics class since high school???

But it's not just us! When I was analyzing my first data, I remember asking someone why I should use an ANOVA, and they said, "I'm not sure, I think it's because you have a lot of bars?" I'm not even kidding. I remember it exactly, because it seemed crazy that someone who ALREADY had their PhD didn't know something that seemed, even to me in my early stages of science, to be pretty important.

Basically, I completely agree with you that we should emphasize statistics EARLY on in science education, because it sucks taking a class and constantly thinking, "Wow, that would have been really helpful to understand X years ago."

I SECOND ALL OF THIS. But really, earlier this semester I was reflecting on some of my earlier attempts at science and was absolutely horrified by some of the statistical atrocities my naive high school and undergrad self committed. For example, I had a science fair project in my sophomore year of high school that relied entirely on a TON of multiple t-test comparisons when I should have been using ANOVA. And it won awards at the STATE and NATIONAL level science fairs. Not only did I think that multiple t-tests were a good idea but also none of the professional scientists that were judging it called me out on it. Oh the horror.

I entirely agree with you and Jessie that we need to start proper statistical training MUCH earlier than the second year of a PhD program. Not only is it useful to know, but it makes me question the quality of all data I've produced up until this point (So thanks, biostats, for causing such a scientific existential crisis). Like Jessie, I have also met older grad students/post docs/faculty members who couldn't explain to me why I should use certain statistical tests. And goodness knows I've met very few scientists who properly randomize their experimental design.

I know we as scientists are often very settled in our ways, but I think we need some good way to provide "continuing education" classes to grad students/post docs/PIs in practical experimental design and statistics. I feel like much of what we do in science is learning "on the job", so if your mentors don't know what they're doing in the realm of statistics, you're totally screwed. Providing some kind of training in these areas might help level the playing field in terms of how people design experiments and analyze experimental data.